November 3, 2020

As technology advances, older ways of working become less viable and less sustainable, and surgical training is no exception: complex procedures are becoming more strenuous and mentally taxing. More than ever, surgeons need ongoing training to keep up with the latest innovations that can aid them.

Surgeons normally train by watching experienced surgeons working. There is a gap here where VR can help. It makes sense to watch a 3D representation of the real thing on a headset.

Osso VR has been made possible by Oculus for Business. This package has changed how companies go about creating solutions. It has never been easier to create an enterprise-grade product than it is now.

Justin Barad, MD, seconds this.

He is an orthopedic surgeon and software developer who founded Osso VR after years of frustration in operating rooms. "There were always new medical devices, but we would literally be Googling how to use them while the patient was on the table," he says. "Learning curve data shows that you have to perform a new procedure 100 times to be proficient. So we often didn't use the new devices for safety reasons – we didn't have time to learn everything well enough."

Osso VR is expanding its training curriculum, and VR training is proving its worth in the surgical field. Osso VR currently has more than a dozen modules in use and is set to add more.

There are promising new technologies to help the health industry, yet many new medical devices fail to gain momentum in the growing field of medicine. Osso VR’s technology, coupled with Oculus for Business, is a step in the right direction and a must-have in our technologically advancing world.

Connect with iTRA to discuss your next project.

October 20, 2020

HP plans to release the Omnicept Edition of its Reverb G2 virtual reality headset in 2021. It is packed with brand new features; the most interesting are its face tracking sensors and its ability to measure the user's stress levels and cognitive load.

How useful would it be for a lecturer to know how their students are coping with the learning process? This information would help companies fine-tune their approach and learning content.

HP’s upcoming VR headset is set to do just this. Using four cameras, it can sense expressions, heart rate and eye movements. HP pitches “foveated rendering, this HMD delivers lifelike VR like never before”. This is quite a step forward in the VR world, and there is some speculation about how developers will use this sort of biometric data. Knowing how users react helps create better courses and content.

HP is looking forward to seeing how its new technology will be used and says, “By capturing user responses in real time, you can generate insights and adapt each user’s experience.”

The developer tools that come with the headset can show how each user is handling the “cognitive load”. This means the lecturer can see how students are faring in the VR world that has been created, including which parts of a VR experience a student likes and which they do not.

HP says “HMD firmware safeguards sensor data at every moment of capture and no data is stored on the headset. HP Omnicept powered applications help ensure the capture and transfer of data comply with GDPR and keep user data confidential”.

HP currently offers its PC-powered Reverb G2 headset, without the Omnicept sensing capabilities, for around $600 USD. HP made this original headset in partnership with Valve and Microsoft, with a resolution of 2160 x 2160 per eye, which is quite good by today's VR standards. New orders for this device are expected to ship by December.

October 13, 2020

Dogs are said to be man's best friend, and this is especially true in the armed forces. Military working dogs are used for scouting and finding explosives, along with other reconnaissance missions. It is essential that these dogs receive commands from their handlers, both to keep them safe and to guide them through the mission.

Giving augmented reality (AR) technology to working dogs sounds like a great idea. One of the best things about using AR to guide military dogs is that they already wear military goggles for eye protection. The Army Research Office, part of the U.S. Army Combat Capabilities Development Command, is working on this new technology. AR is being developed for dogs so that commanders can direct their companions to where they need to be. It also helps keep soldiers out of harm's way, as they can stay further from their canine companion.

It also bridges the communication gap between human and canine. The need for better communication between the two is well known, and AR may be able to meet it.

For example, when a canine can't see its trainer, it might lose focus, become disoriented and drift off task. The AR goggles give a permanent link between canine and human.

Dr. A.J. Peper founded Command Sight in 2017 after identifying the need for better human-animal communication in the field. Peper was surprised by the initial feedback on his proof of concept: “the system could fundamentally change how military canines are deployed in the future.”

The AR tech being trialed is specially designed to fit the canine's shape and needs. A visual indicator in the goggles shows the dog where it needs to go, and the dog reacts to these visual cues.

The handler can now see exactly what the dog sees through the AR headset.

“Augmented reality works differently for dogs than for humans,” said Dr. Stephen Lee, an ARO senior scientist. “AR will be used to provide dogs with commands and cues; it’s not for the dog to interact with it like a human does. This new technology offers us a critical tool to better communicate with military working dogs.”

For now, the prototype is wired, which for the dog is similar to being on a leash. Researchers are working on a wireless version for the next stage of development.

“We are still in the beginning research stages of applying this technology to dogs, but the results from our initial research are extremely promising,” Peper states. “Much of the research to date has been conducted with my rottweiler, Mater. His ability to generalize from other training to working through the AR goggles has been incredible. We still have a way to go from a basic science and development perspective before it will be ready for the wear and tear our military dogs will place on the units.”

The research conducted focuses on how the canine eye perceives the world.

“We will be able to probe canine perception and behavior in a new way with this tool,” Lee said.

Military working dogs are currently directed by hand signals or laser pointers, which work well when there is line of sight. The issue arises when the canine can no longer see the handler, which can become a safety issue not only for the canine but for the handler too.

Equipping working dogs with augmented reality goggles makes sense, doesn't it? It could offer special forces and their canine companions a new way to communicate.

“The military working dog community is very excited about the potential of this technology,” Lee said. “This technology really cuts new ground and opens up possibilities that we haven’t considered yet.”

It’s not a huge step to put AR technology into what they are already wearing. It makes adoption of this technology cheap and effective. It’s set to greatly improve communication between canine and handler.

“Even without the augmented reality, this technology provides one of the best camera systems for military working dogs,” Lee states. “Now, cameras are generally placed on a dog’s back, but by putting the camera in the goggles, the handler can see exactly what the dog sees and it eliminates the bounce that comes from placing the camera on the dog’s back.”

The researchers plan to spend another two years refining the product and making it wireless. The Army Research Office is providing additional funding for the next phase of development.

September 30, 2020

Oculus for Business is a virtual reality platform for enterprises, providing software and a structure to manage VR deployment with a tailored experience. Most importantly, it gives organisations control over a fleet of headsets and includes enterprise-grade customer support provided by Oculus. Oculus’ business solution offers a great user experience as well as cutting-edge data security and privacy.

The first thing you need is adequate hardware to support the VR devices and the solution. See our last blog post on the upcoming Oculus Quest 2. You also need the ability to manage your VR deployments. Oculus’ platform gives you that control, along with integration options and content to run on the headsets.

If you lack the internal team to set up and deploy Oculus for Business on your devices, or don’t have your devices yet, Oculus has made it easy to have a third-party company, such as iTRA, design and deploy your VR experience while you retain full control.

What can your business gain from switching to Oculus for Business? VR technology is proven in training. VR can give a more hands-on feel when prototyping new products. It can also be a cool way for co-workers to interact.

Oculus for Business gives your company the ability to manage its fleet of devices, and Oculus provides technical support for the VR solution. Oculus knows it can prove costly to call in IT experts to help with maintenance, so it has engineered its platform to make setting up and maintaining the fleet easy.

Oculus for Business has been designed for devices to remain on premises. There is nothing worse than workers taking company devices home and downloading games or other trivial things.

Oculus recommends a well-planned approach to implementing its product. Appoint someone who can be responsible for driving the solution. Organisation is key to getting this product to work for your company: it will improve efficiency and reduce the cost of implementation and the resources spent.

VR is coming of age and appears to be a great solution for training and other company projects, whether that is prototyping, showcasing new products, or training in a safe, socially distanced environment. Not only should this technology make your company more efficient, but your workers will love trying out the new gear. The best thing is that Oculus for Business gives your company more control over its own assets.

September 22, 2020

We are living in uncertain times: the outside world is dangerous and virtual reality seems like a good idea. When we need to keep our distance from others, VR becomes a good option in theory, but headsets can feel clunky and at times make for a somewhat unnatural experience. The Oculus Quest 2, due to ship on October 13th, is heading in the right direction, being self-contained and offering an improved screen and reduced weight.

While the Oculus Quest 2 has kept many of the original features that worked, like the standalone design, improvements have been made where they were needed: it is lighter, has an improved screen and feels more comfortable as a whole.

The original Quest was known for its sleek all-black appearance, but the Oculus Quest 2 has gone for a lighter colour scheme, with a pure white body and black foam face mask that give it a great-looking two-toned appearance. Oculus has kept the same rounded plastic front end, with each corner supporting an outward-facing tracking camera.

The first Quest headset felt quite front-heavy, and the new unit is somewhat lighter. Oculus has also opted for a more comfortable cloth strap and offers an alternative strap option.

Oculus is still using an LCD screen in the Quest 2, but the resolution is higher: 1832 x 1920 pixels per eye, to be exact. The refresh rate is up to 90Hz, meaning up to 90 frames per second, which makes for a smooth, crisp experience.
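To put those display figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the 1832 x 1920 figure is per eye and that both eyes are refreshed at the full 90Hz; the function name is our own, for illustration only.

```python
def pixels_per_second(width: int, height: int, eyes: int, refresh_hz: int) -> int:
    """Pixels the headset has to draw each second at the given refresh rate."""
    return width * height * eyes * refresh_hz


# Figures quoted above; treating the resolution as per eye is an assumption.
quest2 = pixels_per_second(1832, 1920, eyes=2, refresh_hz=90)
print(f"{quest2:,} pixels per second")
```

Under those assumptions, the headset is drawing roughly 633 million pixels every second.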

The Quest 2 uses Qualcomm’s Snapdragon XR2 chipset, which on paper is twice as powerful as the Snapdragon 835 used in the original Oculus Quest. This helps it run richer content at higher resolutions, making for a better experience. A more efficient processor also consumes less power for the same work, so the battery should last longer before needing a recharge.

The Quest 2 has more memory, going from 4GB in the original headset to 6GB. Storage options now start at 64GB and go up to 256GB. These upgrades come at a lower price than the predecessor, with the base model costing $299 USD instead of $399.

Not much has changed in the way of audio: the headset still uses small directional speakers, which can be more comfortable for the user than wired headphones or earbuds. One drawback is that the sound isn't confined to the user; it can be heard by other people in the same room, which could be distracting for them. The overall sound quality is still adequate.

The Quest 2 uses the third generation of Oculus Touch, providing two plastic controllers with a good grip and a nice tactile feel. Each controller has a grip button, a trigger, two face buttons and an analog stick. Oculus has reintroduced the thumb rest, which makes for a better controller feel.

The controllers use two AA batteries for power rather than built-in, non-replaceable rechargeable batteries. Because there is no easy way to plug the controllers into a charger, it ends up being easier to replace the batteries when they run out. Oculus has improved the efficiency of its controllers, which means the batteries will last longer: four times longer, Oculus says.

VR is a technology that can take you right to your workplace wherever you are. Virtual workplaces are fast becoming a normal way of life, especially in a time where there are restrictions on how close we are able to interact. Still this technology has a fair way to go for a fully comfortable experience for the user, but the Oculus Quest 2 is certainly a step in the right direction. VR is feeling more and more comfortable to the user as technology improves.

December 10, 2019

As augmented and virtual reality technologies mature, they are becoming a viable option for enterprise training. However, there is still a way to go before these technologies become commonplace in most business scenarios.

J.P. Gownder, vice president at Forrester, states, "Right now, we mostly remain in the early testing phase for AR and VR, but employee training is becoming a more common scenario."

Walmart and UPS are two companies that have rolled out VR training programs, helping new employees master their jobs faster and with higher quality and safety. At UPS, new drivers use VR headsets to simulate city driving conditions during training. Large mining companies are using AR to help workers identify and fix problems with equipment, in factories or out in the field.

VR has had a huge impact on the consumer side of things, mostly due to gaming. Enterprise AR adoption, however, is ahead of consumer AR in terms of maturity, according to Tuong Nguyen, principal research analyst at Gartner.

He goes on to say, "We're really in the adolescence of AR and VR. We've had some time to test it, but it's still in its teenage years, so there are some growing pains to be expected. But we're already starting to see its potential."

The top three enterprise AR use cases right now are video guidance, design and collaboration, and task itemization, according to Nguyen.

"For training, it's helpful for situations that are high risk," Nguyen said. "If it's expensive or dangerous to have someone training in a live environment, but you want them to at least know the muscle movement and the decisions they will need to make, you can have them do it in a virtual space, rather than in the physical world." Nguyen says this could include scenarios such as military surgery training, combat training or other emergency response training.

There are a number of products on the market aimed at enterprise adoption of VR/AR technology. A couple of examples are HTC Vive Pro and the Oculus for Business bundle. These can be used for enhancing worker productivity and job training in fields such as manufacturing and design, retail, transportation and healthcare.

"For any business, when implementing tech, it's either making you money or saving you money," Nguyen says. "When you talk about employee training and use of immersive tech, it tends to be some type of cost savings, whether in the form of less accidents, higher accuracy rates, or fewer mistakes. That's the kind of benefit that the CIO should be expecting." That said, because these are interface technologies, success depends mostly on the task, he added.

Nguyen recommends doing some research and testing to see how these technologies can apply to your company. "Think about how it applies to solving certain problems, as an extension of tech," Nguyen said. "Don't just bring it in and say, let's see what we can do with this."

The entertainment industry is still the main user of these technologies. However, Todd Richmond, IEEE member and director of the Mixed Reality Lab at the University of Southern California, says "the bigger play long-term are the business verticals" and "Medical and education are going to outstrip entertainment with regards to uses for VR and AR."

The biggest use for AR/VR technologies in the future will be around telepresence, Richmond said. "It's the promise that has been on the horizon, of being able to telecommute in ways that are meaningful and productive," he said. "We're still not there yet. The immersive stuff is a new medium for communication and collaboration, and it takes time to figure out how to use a new medium effectively."

Richmond recommends that interested companies seek out academic conferences to learn more about how these technologies could fit into their current business structure. He says, "The trick for the enterprise is going to be figuring out when to make the leap, and when things are mature enough to move from it being a curiosity, and a set of experiments, to being a core part of their business."

November 19, 2019

While we wait for Oculus to release the Finger Tracking SDK for the Quest, I’m playing around with HTC Vive’s implementation of hand and finger tracking to get a feel for the new input method and what advantages it unlocks!

Watch this space, more videos coming.

October 17, 2019

The Oculus Quest was released in May 2019, offering a VR experience that surpassed its rivals, all contained within a wireless, portable headset. Since its release we have seen numerous improvements, including new features and monthly software updates, as Oculus has progressively improved the Quest to give customers the best VR experience possible. At OC6, Oculus recently announced a lineup of new features coming to the Quest. These are expected to unlock the full potential of the Quest and further expand how users interact with their content.

Controlling a VR world has never been so simple. With hand tracking set to raise the bar, you no longer need controllers, and external sensors, gloves or a PC are no longer required either. What does this all mean? It enables the user to interact much more naturally with their VR environment, for a far more immersive experience.

A new feature creating excitement among users of this technology is Oculus Link, a new way to access Rift content on Quest headsets. Beginning in November, those who own a Quest and a PC will be able to access the Rift library with the Oculus Link software. You will be able to do this with any USB 3 cable, but Oculus will soon release a high-performance optical fiber cable to give its customers the best experience possible.

With Oculus Link it is now much easier to train workers on a large scale, and interaction between trainees and teachers can be more valuable and much more fulfilling. With everything Oculus has updated and the effort it has put into its headsets, it is creating a much more immersive experience than VR technology has previously provided.

October 3, 2019

The Oculus Quest has something new in store: hand tracking, and it's set to change how we use VR. Many VR users have had difficulties with control and with making movements feel natural. This is about to change with the Oculus Quest and its new hand tracking technology. It will allow the user to be more immersed in VR and connect on a much deeper level, which will no doubt improve the VR experience.

All users of VR, new and old, will benefit greatly from this leap forward, as the experience is going to feel more natural. Hand tracking on the Quest will also reduce the difficulty in learning for people new to VR, and those who are not familiar or comfortable with gaming controllers. Probably the greatest benefit is that you no longer have to feel around for that controller you dropped while being completely immersed in the VR experience.

The Quest’s hand tracking technology was showcased at OC6. It is expected to be launched in early 2020 as an experimental feature for consumers. Developers of VR apps will be able to create products using hand gestures to control their experiences. The Quest community will be able to trial this new technology early next year to get a feel of what's coming and how this will improve the experience.

This project started out at Facebook Reality Labs and has turned into a great product that enables a new form of VR input. Oculus’s computer vision team developed a new way of using machine learning to work out, in real time, where the user's hands are and how the fingers are positioned. This is accomplished using the monochrome cameras already found on every Oculus Quest headset. Oculus didn’t need to use depth-sensing cameras, additional sensors or more processing power.

This technology is an important milestone for VR training. The trainee can have their hands free from controllers which can aid in the learning process, as the experience will feel more realistic. In the future, it is expected that this technology will allow the user to pick up objects and use them as they would in the real world.

Bringing your hands into the VR world without the need for controllers is groundbreaking. It will allow the user to feel much more comfortable using VR. In training, it is extremely important that trainees feel comfortable with the way they are learning, as they are then much more likely to retain what they have learnt. VR is becoming the go-to for all sorts of training scenarios.

September 19, 2019

“We always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten. Don't let yourself be lulled into inaction.”

- Bill Gates

We were delighted to present the “Industry Perspective” at the inaugural XR.Edu Summit held at Hale School this week. iTRA’s Director, Mark Broome, showcased our experience and innovations to the room of educators, giving them a perspective on the business cases for Extended Reality in the real world. We spoke with a number of people afterwards who recognised the value of giving students exposure to #VR #AR #XR and giving them projects with tangible applications.

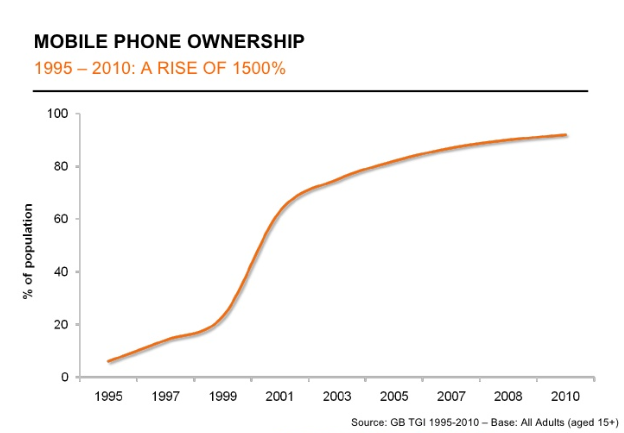

Mark predicted that in the next few years, as hardware costs fall and business cases become clearer, we will see an exponential rise in the use of XR applications across all industries. He rammed home his point with the example of mobile phones, highlighting the adoption rate of technology when the elements align.

As early developers, iTRA is fortunate to have long-term clients that recognise the benefits of including VR training in their suite of tools to improve the skills of their workforce. We have been developing XR applications since 2017 and continue to identify areas within our clients’ businesses where tangible improvements can be made with VR or AR applications.

Our experience shows that VR is without doubt an ideal training tool for immersion in high risk work environments and a cost-effective alternative for all kinds of training for physical activity, such as use of fire extinguishers, driving, identification of objects, etc.

AR has enormous potential for improving efficiencies in operations and this has been proven by major companies who have adopted the technology, such as DHL, Toll, and Boeing. Our AR Tagging App is just one application, but AR in Inspection & Maintenance, Operations Training, Remote Collaboration and Working Guides are all areas where AR will save both time and money.

The XR.Edu Summit presentation was well received by the audience who were looking for inspiration to produce graduates who are ready for the real world – in an extended reality space.

Please contact us for more information on the use of XR applications within your organisation.

ABN: 67119 274 181